RYOULIVE.AI

The Piano Has Already Been Built

By Arne Lefalk

Foreword

For a long time, I felt something was off. Not broken in an obvious way, not failing in a way people could point to, just… misaligned. The digital world kept getting faster, smarter, more impressive. Yet underneath it all, something fundamental didn’t add up. We were scaling intelligence, but not control. We were generating value, but not ownership. We were becoming more connected, yet more dependent. And because everything “worked,” almost no one questioned it. But working is not the same as being right. This is about what happens when you realize that the foundation itself is the problem, not just the layers built on top of it.

We Built Recordings, Not Instruments

What we call AI today is extraordinary, but at its core, it is still a recording. It predicts, recombines, and plays back. It responds to prompts, processes information, and delivers results through systems that are not yours, running on infrastructure you don’t control. It is powerful, but it is not personal in the way it should be. So I asked a different question. What if AI wasn’t a recording? What if it was an instrument? A recording gives the same output to everyone. An instrument becomes something entirely different depending on who is playing it. That idea became the starting point. Because the future of intelligence should not be about access to someone else’s system; it should be about alignment with your own.

The Problem Was Never Intelligence

Back in 2015, I became convinced that we were solving the wrong problem. Everyone was focused on making systems smarter: more data, more compute, more scale. But the real limitation wasn’t intelligence; it was structure. Traditional computing moves data back and forth between memory and processing, creating delay, friction, and energy loss. At small scale, it’s manageable. At global scale, it becomes the bottleneck. Today, we’re seeing the consequences. AI systems consume enormous amounts of energy. Entire data centers exist just to keep up with computational demand. We are burning massive resources to simulate intelligence, and that approach does not scale forever. So I started from a different premise. What if intelligence doesn’t need more brute force? What if it needs a different kind of coordination?

From Calculation to Resonance

Instead of treating data as something that must constantly be moved and processed, I explored what happens when systems are aligned instead of sequential. Not calculated step by step, but tuned. The simplest way to understand it is through music. You don’t calculate harmony, you create it. You don’t compute resonance, you align frequencies until something clicks. That shift led to a different kind of architecture, one where identity, data, and distribution are not separate layers, but part of the same system. When those elements align, the system stops behaving like a tool. It starts behaving like an extension of you.

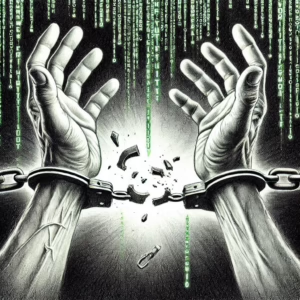

Identity Is the Center of Everything

If you want to change how systems behave, you have to start with identity. Today, your identity is fragmented and rented. You log in through platforms, authenticate through third parties, and store your digital life in systems you do not control. It works, but it comes at a cost. You can be locked out, tracked, replaced. That is not ownership; that is access. So I built from a different assumption. Identity should not be something you borrow; it should be something the system aligns to. When identity becomes foundational, everything else can be built around it: security, interaction, value, trust.

Trust Cannot Be Added Later

We often talk about trust as if it were a feature, something you add once the system is built. Encryption, authentication, permissions. But trust doesn’t work that way. If the structure is wrong, no amount of layers will fix it. You can patch vulnerabilities, reduce risk, delay failure, but you cannot create true trust on top of a flawed foundation. Trust has to emerge from the architecture itself. That means reducing exposure, reducing dependency, minimizing unnecessary movement of data. It means designing systems that do less, but do it in a way that is fundamentally aligned with the user, not the platform.

From Assistant to Proxy

Most AI today helps you, but it still sits outside of you. It suggests, drafts, recommends, and when something actually matters, you step back in. The next phase is different. AI should not just assist you, it should represent you, within clear boundaries, with verifiable alignment to your identity. Not as a replacement for human decision-making, but as an extension of it. That shift changes everything. Because once systems begin to act on your behalf, the question is no longer what they can do, it is whether they can be trusted to do it.

Why This Moment Matters

For years, the answer to every limitation in technology was the same: add more. More data, more compute, more infrastructure. Now we are starting to see the limits of that thinking. Energy is becoming a constraint. Data ownership is becoming a conflict. Trust is becoming the real bottleneck. And people are beginning to ask a deeper question: not just what technology can do, but who it is actually built for.

The Shift Few People Are Talking About

If this sounds unlikely, consider this. Twenty years ago, the idea that you would carry a supercomputer in your pocket, communicate globally in real time, and interact daily with intelligent systems would have sounded unrealistic. Today, it is normal. Not because it was obvious, but because the underlying architecture changed. That is what is happening again. Quietly. Before it becomes obvious.

Closing Reflection

So instead of ending with answers, let me leave you with questions, not the kind you scroll past, but the kind that stay with you.

- If AI becomes the operating layer of society, who actually controls it: you, or the platforms that host it?

- If your digital identity can be turned off, suspended, or monetized by someone else, is it really yours?

- If intelligence continues to scale but ownership does not, who truly benefits from the future we are building?

- If systems know everything about you, but you control very little of them, is that progress, or just a more comfortable form of dependency?

- If trust has to be engineered into the foundation, why are we still trying to add it afterward?

- If your digital self could act on your behalf (make decisions, transact, negotiate), would you trust it? And more importantly, who ensured that you could?

- If the infrastructure shaping your life is invisible, how do you know whose interests it serves?

And perhaps the most important question of all…